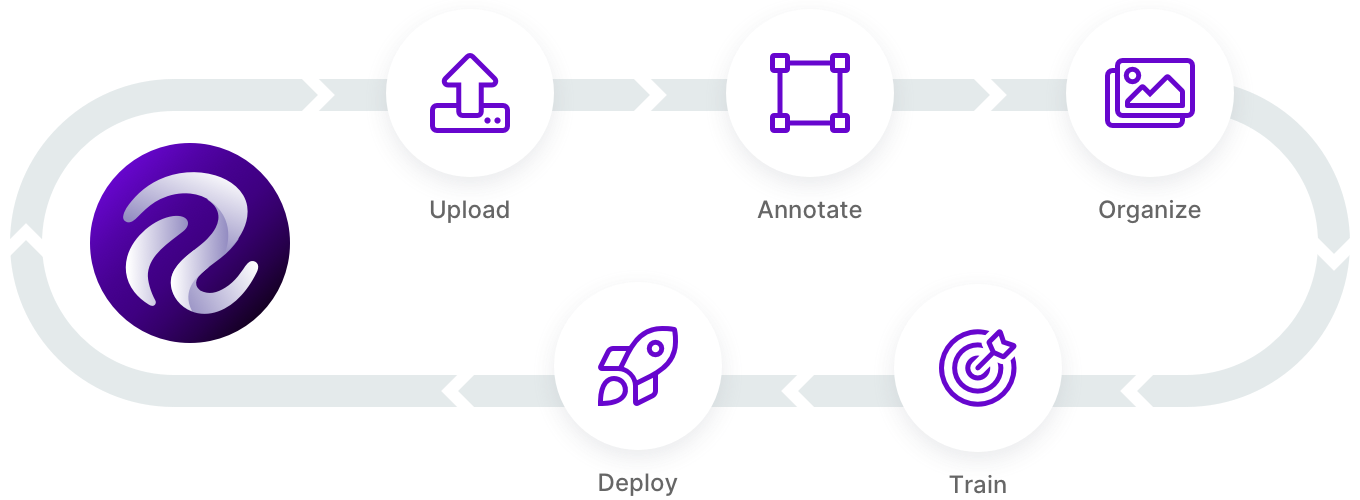

You've built your first model and plan to get it deployed to production. Now what?

Like any software, a computer vision model needs continuous improvement to handle edge cases. This process starts before we bring our model into production, and it continues after deployment. Our models are not going to be perfect, and that's OK. We should be building systems that tolerate faults and allow for continued improvement.

We built the Roboflow pip package to make automating active learning easy, and we made the hosted Active Learning UI simple to configure.

As a refresher, active learning is the process of identifying the examples that will help our model learn most quickly. For example, if you're building a package detection model, you may find the model starts to do very well on common package shapes (boxes) but needs more data on less common packages (yellow flat packs). (In case you missed it, we've walked through what active learning is with a package example like this one in mind.)

The principal question of active learning, then, is: how can we identify which data points to prioritize for (re)training? Put more simply, which images will improve our model's capabilities fastest?

Model failure comes in multiple forms. Let's return to the package detection model example. Perhaps our model identifies objects on our doorstep that are not packages as packages – false positive detections. Perhaps our model fails to identify that there was a package on our doorstep – false negatives. Perhaps our model identifies packages, but it is not very confident in those detections.

In general, we should continuously monitor our production systems and collect data from them. Here are a few strategies we can employ to improve continuous data collection.


1. Continuously Collect New Images at Random

In this strategy, we sample images from our inference conditions at a regular interval, regardless of what the model predicted on them. Pretend we have a model running on a video feed. With continuous random collection, we might grab every 1000th frame and send it back to our training dataset.
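The two sampling schemes can be sketched as follows. This is a minimal illustration, assuming frames arrive as any Python iterable (for example, decoded video frames); the function names `sample_every_nth` and `sample_randomly` and their `n`/`rate` parameters are hypothetical and not part of any Roboflow API.

```python
import random
from typing import Iterable, Iterator, Optional, Tuple, TypeVar

T = TypeVar("T")


def sample_every_nth(frames: Iterable[T], n: int = 1000) -> Iterator[Tuple[int, T]]:
    """Yield (index, frame) for every nth frame, starting with the first.

    The model's output is ignored entirely: selection depends only on position.
    """
    if n < 1:
        raise ValueError("n must be >= 1")
    for i, frame in enumerate(frames):
        if i % n == 0:
            yield i, frame


def sample_randomly(
    frames: Iterable[T], rate: float = 0.001, seed: Optional[int] = None
) -> Iterator[Tuple[int, T]]:
    """Yield (index, frame) for each frame independently with probability `rate`."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be in [0, 1]")
    rng = random.Random(seed)  # a private generator keeps runs reproducible when seeded
    for i, frame in enumerate(frames):
        if rng.random() < rate:
            yield i, frame


if __name__ == "__main__":
    # Integers stand in for video frames here.
    print([i for i, _ in sample_every_nth(range(5000), n=1000)])
    print(len(list(sample_randomly(range(5000), rate=0.01, seed=42))))
```

A fixed interval is simple and predictable, but it can line up with periodic patterns in the scene (such as a delivery truck that arrives on a schedule). The random variant avoids that at the cost of uneven spacing between samples.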

Random data collection is especially helpful for catching false negatives. Because we don't know in advance where the model failed, a random selection of images may include those failure cases.

On the other hand, random data collection is, well, random. It's not particularly precise, and that can result in searching a haystack of irrelevant images for our needle of insight.

2. Collect New Images Below A Given Confidence Threshold

When a model makes a prediction, it provides a confidence score for that prediction. We can set a minimum acceptable confidence threshold for model predictions. If a prediction falls below that threshold, the image may be a good one to send back to our training dataset.
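A threshold check like this takes only a few lines. The sketch below assumes each prediction is a dictionary with a `"confidence"` key, similar in shape to typical inference JSON output; the key name, the `0.5` default, and the helper names are assumptions for illustration, not a documented Roboflow interface.

```python
from typing import Any, Dict, List

Prediction = Dict[str, Any]  # assumed shape, e.g. {"class": "package", "confidence": 0.41}


def low_confidence(predictions: List[Prediction], threshold: float = 0.5) -> List[Prediction]:
    """Return the predictions whose confidence falls below `threshold`."""
    return [p for p in predictions if p["confidence"] < threshold]


def should_collect(predictions: List[Prediction], threshold: float = 0.5) -> bool:
    """Flag an image for collection if any of its predictions is below the threshold.

    An image with no predictions is never flagged: there is no confidence to
    inspect, so this check cannot surface false negatives.
    """
    return len(low_confidence(predictions, threshold)) > 0


if __name__ == "__main__":
    preds = [
        {"class": "package", "confidence": 0.92},
        {"class": "package", "confidence": 0.41},
    ]
    print(should_collect(preds, threshold=0.5))
```

The threshold itself is a tuning knob: raising it sends more images back for review, while lowering it keeps only the least certain ones.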

This strategy has the advantage of focusing our data collection on the images most likely to contain model failures. It's an easy, low-risk optimization for improving our data collection.

However, this strategy won't catch all false positives or any false negatives. A model can be confidently wrong, producing false positives whose confidence exceeds our threshold. False negatives have no associated confidence at all, because there is no prediction in these cases.

3. Solicit Your Application's Users to Verify Model Predictions

Depending on how your model is used, you may be able to ask the users who interact with it to confirm or reject its outputs. Pretend you're building a model that helps pharmacists count pills. Those pharmacists can compare the model's predicted count against their own count. The application using the vision model could include a button to report that a given count looks incorrect, and that sample could be sent back for continued training. Even better, when a user doesn't report a problem, that sample could be used for retraining as an implicit confirmation that the model's prediction was correct.
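One way to capture this feedback is to sort each sample into a "flagged" or "confirmed" bucket as users respond. The sketch below is hypothetical throughout: `FeedbackSample`, `FeedbackCollector`, and their fields are illustrative names, and the in-memory lists stand in for whatever storage or upload step your application actually uses.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class FeedbackSample:
    image_id: str
    predicted_count: int
    flagged_incorrect: bool
    user_count: Optional[int] = None  # optional correction typed in by the pharmacist


@dataclass
class FeedbackCollector:
    flagged: List[FeedbackSample] = field(default_factory=list)
    confirmed: List[FeedbackSample] = field(default_factory=list)

    def record(
        self,
        image_id: str,
        predicted_count: int,
        flagged_incorrect: bool,
        user_count: Optional[int] = None,
    ) -> FeedbackSample:
        sample = FeedbackSample(image_id, predicted_count, flagged_incorrect, user_count)
        if flagged_incorrect:
            # Explicit "this looks wrong": a likely failure, worth relabeling first.
            self.flagged.append(sample)
        else:
            # No complaint: treated as implicit confirmation of the prediction.
            self.confirmed.append(sample)
        return sample


if __name__ == "__main__":
    collector = FeedbackCollector()
    collector.record("tray-001", predicted_count=30, flagged_incorrect=False)
    collector.record("tray-002", predicted_count=28, flagged_incorrect=True, user_count=30)
    print(len(collector.flagged), len(collector.confirmed))
```

Keeping the two buckets separate is deliberate: silence is weaker evidence than an explicit flag, since a user who didn't click the button may simply not have checked that count.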

The advantage of this strategy is that it puts a human in the loop during production use, which significantly reduces the overhead of checking model performance.

Of course, this strategy only works if end users see and interact with the model's outputs, which may not be the case for applications like remote sensing or monitoring. Even so, systems designed to remove the need for humans to review images or video commonly go through a phase that blends model and human inference before becoming fully autonomous, and this strategy can be employed during that phase.

Incorporating Active Learning into Your Applications

All of the above strategies rely on automatically sending images of interest back to a source dataset, which is made possible by the Roboflow pip package (pip install roboflow).

Roboflow also includes an Upload API through which you can programmatically send problematic images or video frames back to your source dataset. (Note: if your model runs completely offline, you can cache images locally and send them whenever the system has periodic external connectivity.)
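The offline caching pattern from the note above can be sketched as a small queue: images of interest are copied to a local folder, and a `flush()` call tries to upload them, deleting each file only after its upload succeeds. The `OfflineUploadQueue` class and its `uploader` hook are hypothetical; the hook is assumed to raise `OSError` (which includes `ConnectionError`) when the device is offline.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Callable, List


class OfflineUploadQueue:
    """Cache images of interest on local disk and upload them when a connection is available.

    `uploader` is a hypothetical hook: any callable that sends one file and raises
    OSError (for example ConnectionError) when the upload fails.
    Assumes cached file names are unique; a duplicate name overwrites the earlier copy.
    """

    def __init__(self, cache_dir: Path, uploader: Callable[[Path], None]) -> None:
        self.cache_dir = Path(cache_dir)
        self.cache_dir.mkdir(parents=True, exist_ok=True)
        self.uploader = uploader

    def enqueue(self, image_path: Path) -> Path:
        """Copy an image into the local cache and return its cached path."""
        dest = self.cache_dir / Path(image_path).name
        shutil.copy2(image_path, dest)
        return dest

    def pending(self) -> List[Path]:
        """List cached images that have not been uploaded yet."""
        return sorted(p for p in self.cache_dir.iterdir() if p.is_file())

    def flush(self) -> int:
        """Upload cached images in order, deleting each only after it succeeds.

        Stops at the first failure, on the assumption that the device is still
        offline. Returns the number of images uploaded.
        """
        uploaded = 0
        for path in self.pending():
            try:
                self.uploader(path)
            except OSError:
                break  # keep this file and the rest for the next flush
            path.unlink()
            uploaded += 1
        return uploaded


if __name__ == "__main__":
    # Demo with a fake uploader that simulates being offline, then online.
    workdir = Path(tempfile.mkdtemp())
    frame = workdir / "frame_0001.jpg"
    frame.write_bytes(b"not really a jpeg")
    state = {"online": False}

    def fake_uploader(path: Path) -> None:
        if not state["online"]:
            raise ConnectionError("no network")
        print(f"uploaded {path.name}")

    queue = OfflineUploadQueue(workdir / "cache", fake_uploader)
    queue.enqueue(frame)
    print(queue.flush())  # 0: still offline, so the image stays cached
    state["online"] = True
    print(queue.flush())  # 1: uploaded and removed from the cache
```

In practice, `uploader` could wrap an upload call from the Roboflow pip package, for example `project.upload(str(path))` on a project obtained through `Roboflow(api_key=...).workspace().project(...)`; check the package documentation for the exact arguments and the exceptions it raises before relying on the `OSError` assumption.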

In addition, any model trained with Roboflow reports a confidence score per image (classification) or per bounding box (object detection). These confidence scores can help identify which images are likely to be most useful for continued model improvement.
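When labeling time is limited, these scores can be used to rank images and send only the least confident ones for review. The sketch below assumes you have already gathered, per image, a list of confidence scores (one per bounding box for detection, or a single value for classification); the dictionary format, the helper names, and the choice of the minimum as the image-level score are assumptions, and averaging is an equally reasonable alternative.

```python
from typing import Dict, List, Sequence


def image_score(confidences: Sequence[float]) -> float:
    """Collapse per-box confidences into one per-image score.

    Uses the minimum, so a single shaky detection marks the whole image as
    uncertain. Images with no predictions score 1.0 here, because confidence
    alone says nothing about possible false negatives.
    """
    return min(confidences) if confidences else 1.0


def most_uncertain(images: Dict[str, Sequence[float]], k: int) -> List[str]:
    """Return up to k image ids, least confident first."""
    return sorted(images, key=lambda image_id: image_score(images[image_id]))[:k]


if __name__ == "__main__":
    scores = {
        "porch_001.jpg": [0.95, 0.91],
        "porch_002.jpg": [0.88, 0.35],  # one low-confidence box
        "porch_003.jpg": [0.62],
        "porch_004.jpg": [],  # no detections at all
    }
    print(most_uncertain(scores, k=2))
```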

As always, happy building!